The new report/ban system introduced in Minecraft 1.19.1 has drawn criticism, so we’ve decided to break it down: how it works, why some players want to turn it off, and what impact it will have on the community. Let’s dive in!

What is Mojang’s new report/ban system?

The new report/ban system in Minecraft 1.19.1 gives players a way to file reports if they encounter offensive content in world chat. Pressing the ‘P’ key (the default binding) opens the social interactions screen. From there, players can select the chat messages containing offensive content and choose the category of the report they want to file. They can also add comments. Once a report is filed, Mojang is notified and takes appropriate action.

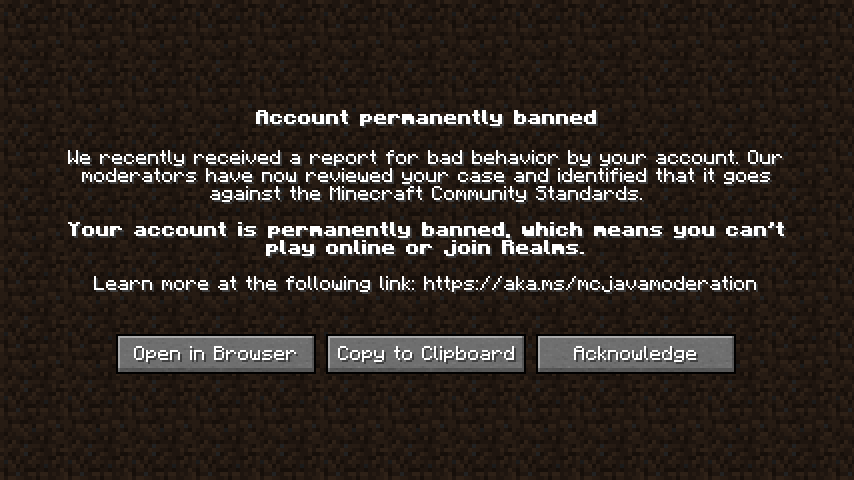

Another important point is that the system will not monitor private Java servers. Mojang has also said that Player Reporting won’t use AI to scan private servers. Moreover, players who are banned will still be able to play in single-player mode, and nobody will have their account removed just because they swear.

Mojang is not backing down from the player reporting system, and Microsoft has said it intends to go ahead with it despite a massive backlash from the game community. The hashtag #SaveMinecraft has garnered a lot of attention. If you’re on the fence about the new reporting system, read on.

First, let’s discuss the new Minecraft player reporting system. This feature was announced just a month ago and implemented as part of the 1.19.1 update. It allows players to flag messages that they find inappropriate; Mojang then reviews the reports and suspends players who have violated its community guidelines. Some Minecraft fans worry that players could be banned over messages taken out of context. More broadly, they fear Microsoft will start dictating the content of the game. Thankfully, there is a way to turn off this new feature if you’d prefer not to have it.

Impact on community

The Minecraft developer, Mojang, has introduced the new reporting system to combat toxic comments and interactions in the game. Although it may not have the same impact on the community as a manual process, it helps players track abuse and can improve the overall experience of the game. The system is not intended to punish repeat offenders, but it may help reduce harmful interactions between younger and older players. Mojang has not announced whether the new reporting system will be a permanent solution.

The introduction of the new reporting system has raised several concerns about its impact on the Minecraft community. Although the system has only been in place for a few weeks, many players are upset and angry about how it was implemented. Some have called it the dumbest update in the game’s history. Others have argued that it is unnecessary and should be restricted to chat. Still others argue that the new reporting system is beneficial and will improve the game’s community-building experience.

Below are the categories under which a player can report other players.

Player Report Categories

- Imminent harm – Self-harm or suicide.
  - Someone is threatening to harm themselves in real life or talking about harming themselves in real life.

- Child sexual exploitation or abuse.
  - Someone is talking about or otherwise promoting indecent behavior involving children.

- Terrorism or violent extremism.
  - Someone is talking about, promoting, or threatening with acts of terrorism or violent extremism for political, religious, ideological, or other reasons.

- Hate speech.
  - Someone is attacking you or another player based on characteristics of their identity, like religion, race, or sexuality.

- Imminent harm – Threat to harm others.
  - Someone is threatening to harm you or someone else in real life.

- Non-consensual intimate imagery.
  - Someone is talking about, sharing, or otherwise promoting private and intimate images.

- Harassment or bullying.
  - Someone is shaming, attacking, or bullying you or someone else. This includes when someone is repeatedly trying to contact you or someone else without consent or posting private personal information about you or someone else without consent (“doxing”).

- Defamation, impersonation, false information.
  - Someone is damaging someone else’s reputation, pretending to be someone they’re not, or sharing false information with the aim to exploit or mislead others.

- Drugs or alcohol.
  - Someone is encouraging others to partake in illegal drug related activities or encouraging underage drinking.

What’s Next?

Following the release of the Minecraft 1.19.1 update, Mojang has responded to criticism of its new player reporting system. The system allows players to report others who engage in abusive behavior in chat. Many players oppose it and have voiced their displeasure. Hopefully, Mojang will address their concerns and the system will improve their experience in the game.

The new reporting system is a good move for players, but it is not without its faults. It is difficult to monitor every single player on a Minecraft server, so players should feel free to report abuse or bullying when they see it. A player reporting system leaves everyone better protected: it can stop players from hurting others, and it improves the quality of gameplay by making servers safer.
